Median formula12/8/2023  When b.percentile = 10 then cast(b.high as decimal(18,2))Įlse cast((a.low + b.high) as decimal(18,2)) / 2 (select min(my_column) as, max(my_column) as, percentile (select my_column, ntile(10) over (order by my_column) as I've amended the answer to follow the excellent suggestion from Robert Ševčík-Robajz in the comments below: with PartitionedData as When you stick that into an explain plan, 60% of the work is sorting the data which is unavoidable when calculating position dependent statistics like this. If you really only want one row that is the median then uncomment the where clause. This will give you the median and interquartile range in one fell swoop. My original quick answer was: select max(my_column) as, quartileįrom (select my_column, ntile(4) over (order by my_column) as RowAsc IN (RowDesc, RowDesc - 1, RowDesc + 1) ORDER BY TotalDue DESC, SalesOrderId DESC) AS RowDesc

ORDER BY TotalDue ASC, SalesOrderId ASC) AS RowAsc, SalesOrderId in the ORDER BY is a disambiguator to break ties Note the use of a unique column as a disambiguator in case there are multiple rows with the same value of the median column.Īs with all database performance scenarios, always try to test a solution out with real data on real hardware – you never know when a change to SQL Server's optimizer or a peculiarity in your environment will make a normally-speedy solution slower. That page also contains a discussion of other solutions and performance testing details. This is a particularly optimal solution when it comes to actual I/Os generated during execution – it looks more costly than other solutions, but it is actually much faster. Here's one particularly well-optimized solution, from Medians, ROW_NUMBERs, and performance. There are lots of ways to do this, with dramatically varying performance. It's possible that this disparity has been improved in the 7 years since, but personally I wouldn't use this function on a large table until I verified its performance vs. I'd also be especially careful using the (new in SQL Server 2012) function PERCENTILE_CONT that's recommended in one of the other answers to this question, because the article linked above found this built-in function to be 373x slower than the fastest solution. If perf is important for your median calculation, I'd strongly suggest trying and perf-testing several of the options recommended in that article to make sure that you've found the best one for your schema. Of course, just because one test on one schema in 2012 yielded great results, your mileage may vary, especially if you're on SQL Server 2014 or later. DECLARE BIGINT = (SELECT COUNT(*) FROM dbo.EvenRows) Note that this trick requires two separate queries which may not be practical in all cases. This solution was 373x faster (!!!) than the slowest ( PERCENTILE_CONT) solution tested. 
This article found the following pattern to be much, much faster than all other alternatives, at least on the simple schema they tested.

Take a look at this article from 2012 which is a great resource: Net-net, my original 2009 post is still OK but there may be better solutions on for modern SQL Server apps. SQL Server releases have also improved its query optimizer which may affect perf of various median solutions. Also, SQL Server releases since then (especially SQL 2012) have introduced new T-SQL features that can be used to calculate medians.

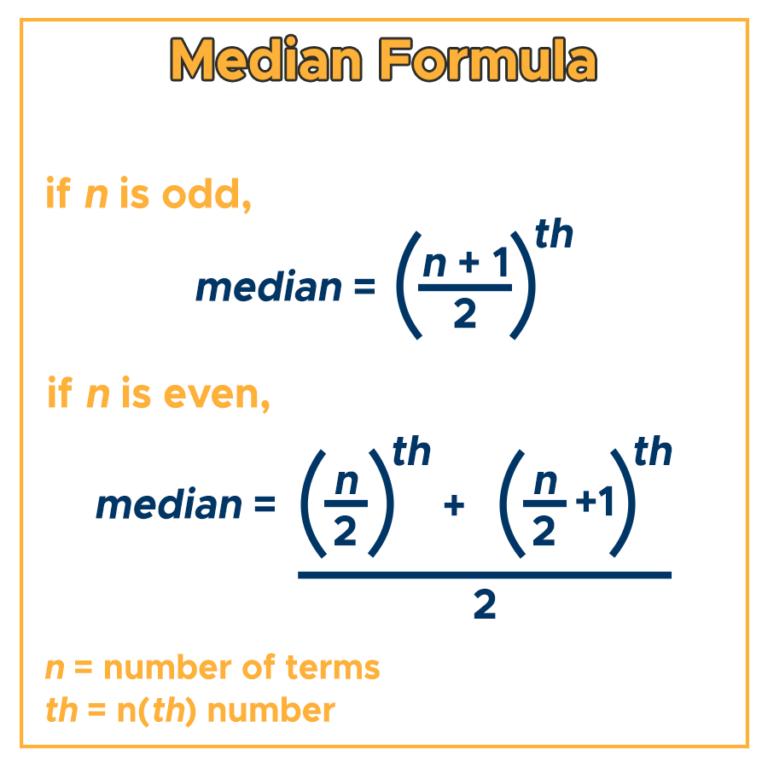

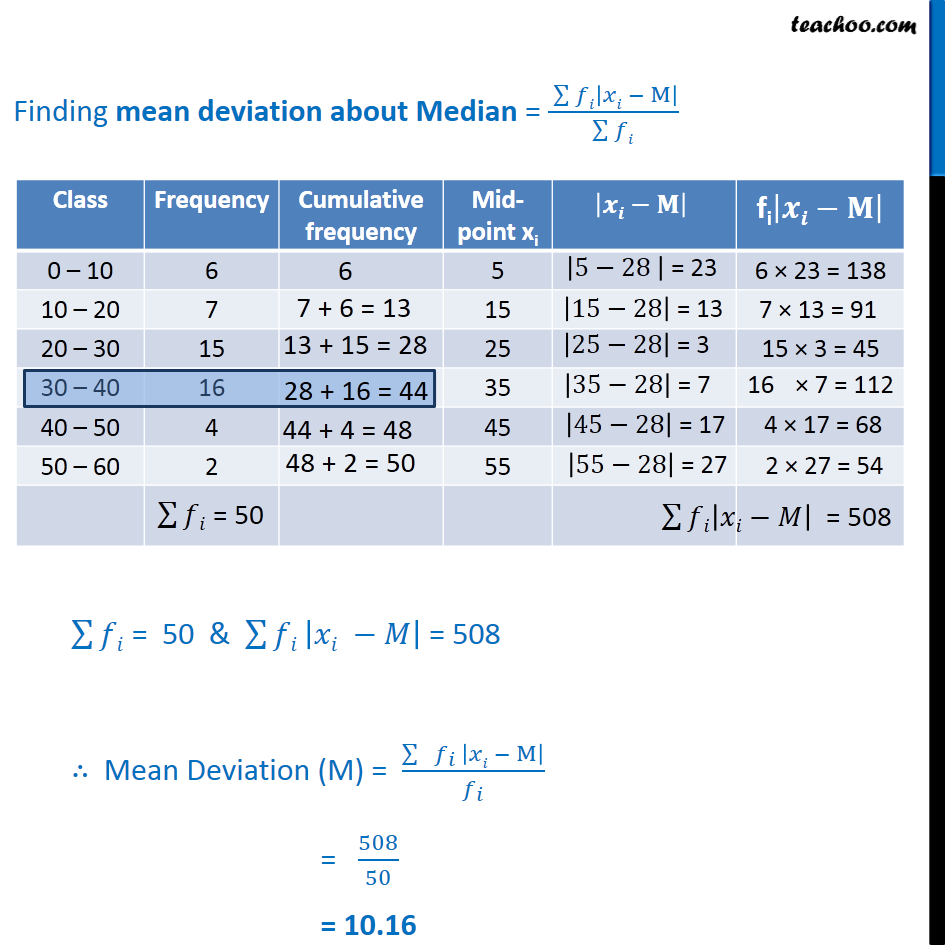

I've been trying to calculate median but still I've got some mathematical issues I guess as I couldn't get the correct median value and couldn't figure out why.2019 UPDATE: In the 10 years since I wrote this answer, more solutions have been uncovered that may yield better results.
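As a rough sketch of the two-query pattern mentioned in the 2019 update: only the row-count step survives in the text above, so the variable name `@c`, the column name `val`, the table variable `@EvenRows` (standing in for `dbo.EvenRows`), and the whole second query using `OFFSET`/`FETCH` are my assumptions rather than the article's exact code. `OFFSET`/`FETCH` needs SQL Server 2012 or later.

```sql
-- Hypothetical sample data; @EvenRows and the column name val are assumptions.
DECLARE @EvenRows TABLE (val INT NOT NULL);
INSERT INTO @EvenRows (val) VALUES (1), (3), (5), (7);

-- Query 1: store the row count (@c is an assumed variable name).
DECLARE @c BIGINT = (SELECT COUNT(*) FROM @EvenRows);

-- Query 2: skip to the middle of the sorted values, fetch one row when the
-- count is odd or two rows when it is even, and average what was fetched.
SELECT AVG(1.0 * val) AS median
FROM (
    SELECT val
    FROM @EvenRows
    ORDER BY val
    OFFSET (@c - 1) / 2 ROWS
    FETCH NEXT 1 + (1 - @c % 2) ROWS ONLY
) AS x;
```

With the four sample rows, the offset is 1 and two rows (3 and 5) are fetched, so the query should return 4. Multiplying by `1.0` keeps `AVG` from doing integer arithmetic on an `INT` column.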
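The two-direction `ROW_NUMBER` approach from the original 2009 post could look roughly like the following. `TotalDue`, `SalesOrderId`, `RowAsc`, and `RowDesc` come from the fragments above; the table variable `@Orders`, its sample rows, and the outer `AVG` are assumptions added to make the sketch self-contained.

```sql
-- Hypothetical sample data; @Orders is an assumed stand-in for the real table.
DECLARE @Orders TABLE (
    SalesOrderId INT PRIMARY KEY,
    TotalDue     DECIMAL(18,2) NOT NULL
);
INSERT INTO @Orders (SalesOrderId, TotalDue)
VALUES (1, 10.00), (2, 20.00), (3, 20.00), (4, 50.00);

SELECT AVG(TotalDue) AS MedianTotalDue
FROM (
    SELECT TotalDue,
           -- SalesOrderId in the ORDER BY is a disambiguator to break ties
           ROW_NUMBER() OVER (ORDER BY TotalDue ASC,  SalesOrderId ASC)  AS RowAsc,
           ROW_NUMBER() OVER (ORDER BY TotalDue DESC, SalesOrderId DESC) AS RowDesc
    FROM @Orders
) AS x
-- The middle row (odd count) or the two middle rows (even count) are the
-- only ones whose ascending and descending positions differ by at most 1.
WHERE RowAsc IN (RowDesc, RowDesc - 1, RowDesc + 1);
```

For the four sample rows, only orders 2 and 3 pass the filter, so the result should be 20. Without the unique tie-breaker, the two tied `TotalDue` values could be numbered inconsistently in the two directions and the filter could pick the wrong rows. To compute a median per group, a `PARTITION BY` on the grouping column in both window functions plus a matching `GROUP BY` would be the natural extension.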
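For the quartile-based quick answer, a minimal sketch might be the following. The column aliases, the table variable `@my_table`, the sample values, and the exact form of the commented-out `WHERE` clause are assumptions, since those parts did not survive in the text above.

```sql
-- Hypothetical sample data; @my_table is an assumed stand-in for the real table.
DECLARE @my_table TABLE (my_column INT NOT NULL);
INSERT INTO @my_table (my_column)
VALUES (1), (2), (3), (4), (5), (6), (7), (8);

SELECT MAX(my_column) AS my_column, quartile
FROM (
    SELECT my_column,
           NTILE(4) OVER (ORDER BY my_column) AS quartile
    FROM @my_table
) AS i
-- WHERE quartile = 2   -- uncomment to keep only the median row
GROUP BY quartile
ORDER BY quartile;
```

With the eight sample values, the quartile maxima are 2, 4, 6, and 8, so the row for quartile 2 reports 4. Note that the conventional median of these values is 4.5: for an even row count this query returns one of the two middle values rather than their average, which is presumably what the amended decile version addresses by averaging the top of one bucket with the bottom of the next.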
